Introduction

Low health literacy, when not identified, is associated with poor health outcomes such as unsatisfactory medication compliance, poor disease management, and increased healthcare costs (Chew et al., 2008; Chima, Abdelaziz, Asuzu, & Beech, 2020; Nehemiah & Reinke, 2020; O’Conor, Moore, & Wolf, 2020; Palumbo, 2017; Rahman, Aziz, Huque, & Ether, 2020; Seidling et al., 2020; Song & Park, 2020). Identifying those with low health literacy could allow interventions to be put in place and their effect on the consequences of limited health literacy to be evaluated. For this project, we define health literacy as “the degree to which individuals have the capacity to obtain, process, and understand basic health information and services needed to make appropriate health decisions” (Ratzan & Parker, 2000). Currently, there are several validated modalities to assess health literacy (e.g., TOFHLA, REALM, NVS) (Bass, Wilson, & Griffith, 2003; Chew, Bradley, & Boyko, 2004; Johnson & Weiss, 2008; Weiss et al., 2005). However, these tools pose severe time constraints and are not feasible to perform routinely in clinics (Johnson & Weiss, 2008). In addition, there is controversy surrounding the use of clinical health literacy assessments, as they may embarrass patients who lack the skills being tested (Chew et al., 2008; Paasche-Orlow & Wolf, 2008).

The three-question screener was validated for identifying limited health literacy in a hospital setting (Chew et al., 2008) and has since been used as a population-level tool to assess limited health literacy as part of the Behavioral Risk Factor Surveillance System surveys (Chew et al., 2008).

Results have indicated that the three-question screener can accurately identify limited health literacy. The three-question screener is quick, practical for routine use in clinical settings, and can be administered electronically. Studies of the electronic administration of health literacy screeners have found no significant difference between paper and computer-based surveys (Chesser, Keene Woods, Wipperman, Wilson, & Dong, 2014). However, studies are still needed that compare the validity of the three-question screener to the widely used and validated Short Test of Functional Health Literacy (STOFHLA).

As previously reported, clinician assessments of patient health literacy levels are often inaccurate (Bass, Wilson, Griffith, & Barnett, 2002; Kelly & Haidet, 2007; Powell & Kripalani, 2005). A quick clinical health literacy screening tool could help improve the quality of health care services for those with reduced health literacy. The goal of this study was to validate the three-question screener as a quick and accurate method to identify limited health literacy for use in various settings. The study used scoring described by the Centers for Disease Control and Prevention (CDC) (Rubin, 2019) to determine three-question screener health literacy levels, which were compared to those from a validated health literacy assessment tool. Both tools were administered electronically. Because the two tools measure health literacy in different ways, some difference in results was expected. However, the goal was to test whether the three-question screening tool would produce comparable results using standardized scoring. The primary objective of this study was to determine whether the two screening modalities were comparably valid. The secondary objective was to assess the feasibility and use of findings for future studies in clinical settings.

Methods

Setting and Participant Recruitment

Participants were recruited as a convenience sample from individuals seeking services at social service community-based organizations, such as food pantries and self-help groups, in multiple urban areas of a Midwestern state. Participants were recruited from January to March 2020. Inclusion criteria were being English speaking, being 18 years or older, being able to read and understand the survey questions, and being able to use an electronic device. Participants did not have to reside in Kansas but had to be receiving health care in Kansas at the time of the study to be eligible. Research team members attended in-person meetings and invited potential participants to complete the survey. Participants were then contacted by email, phone, and in person, given a short description of the survey, and invited to complete the voluntary survey. Participants who were interested in volunteering were sent a hyperlink to the online survey or directed to a website where they could access the survey. All surveys were completed online via laptop/desktop computer, smartphone, or tablet. Over half of the surveys (n=125) were completed independently and remotely, and 100 surveys were completed with a research team member present. This study was approved by a university Institutional Review Board (IRB) to protect human participants.

Health Literacy Assessment Tools Development

An electronic survey was created combining the Short Test of Functional Health Literacy (STOFHLA) and the three-question screener (Keene Woods & Chesser, 2017). The survey began with a brief description of the research study and a consent form for participation. Once participants consented, they were directed to begin the survey. The order of the two instruments was randomized: participants began with either the STOFHLA or the three-question screener and were not told which they would receive first. Each participant completed both instruments, and the transition between them was not identifiable to participants. Upon completing both instruments, participants were directed to a short demographics section and were prompted to answer questions regarding their technology use and current access to health information. Each participant received a $50 gift card upon completion of the study.

Data Analysis

An a priori power analysis was conducted based on literature estimating that inadequate health literacy can affect up to a third of the US population, with prevalence estimates of around 20-30%. Assuming a null hypothesis and alternative hypothesis of 60% and 80% sensitivity, respectively, and a power level >80%, the estimated sample size was between 150 and 225 participants (Bujang & Adnan, 2016). With the understanding that some participants would not be able to complete each screening tool in its entirety, the research team aimed to recruit 225 participants. Descriptive statistics were reported as mean ± standard deviation for continuous variables and count (percentage) for categorical variables. Health literacy levels were calculated based on scoring described by the Centers for Disease Control and Prevention (CDC) (Rubin, 2019). Concordance between the three-question screener and the STOFHLA was determined using McNemar’s test for discordant pairs. A receiver operating characteristic (ROC) curve was used to determine the sensitivity, specificity, and overall ability of the three-question screener to discriminate between adequate and inadequate health literacy. All analyses were conducted using Jamovi (The Jamovi Project, version 1.2.17).
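To illustrate the kind of calculation that underlies a sample size estimate for sensitivity, the sketch below uses a normal-approximation formula for a one-sample test of a proportion and then scales the required number of cases by prevalence. This is an assumption made for illustration only: the two-sided alpha of 0.05, the function names, and the approximation itself are not taken from the study, and the output is not expected to reproduce the tabulated values in Bujang and Adnan (2016) exactly.

```python
from math import ceil, sqrt
from statistics import NormalDist


def cases_needed(p0, p1, alpha=0.05, power=0.80):
    """Cases (truly inadequate participants) needed to test sensitivity.

    Normal approximation for a one-sample proportion test with a two-sided
    alpha. p0 is the null sensitivity, p1 the alternative sensitivity.
    The alpha level is an assumption, not a value reported in the study.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    numerator = z_alpha * sqrt(p0 * (1 - p0)) + z_beta * sqrt(p1 * (1 - p1))
    return ceil((numerator / (p1 - p0)) ** 2)


def total_sample(p0, p1, prevalence, alpha=0.05, power=0.80):
    """Scale the required cases up by the expected prevalence."""
    return ceil(cases_needed(p0, p1, alpha, power) / prevalence)


# Null sensitivity 60%, alternative 80%, prevalence 20% and 30%.
for prevalence in (0.20, 0.30):
    print(prevalence, total_sample(0.60, 0.80, prevalence))
```

The total shrinks as prevalence rises because fewer participants must be enrolled to reach the same number of cases, which is why a prevalence range translates into a sample size range.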

Results

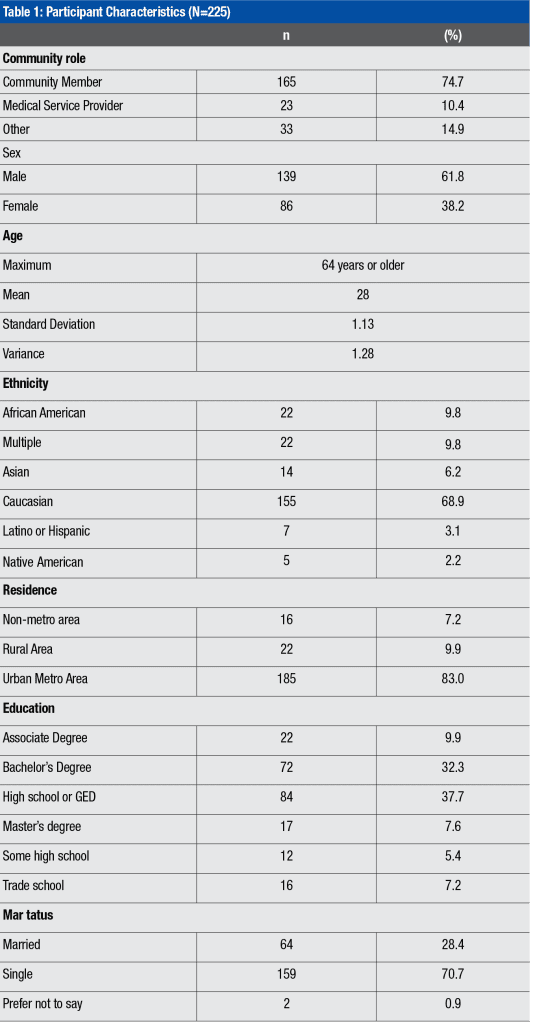

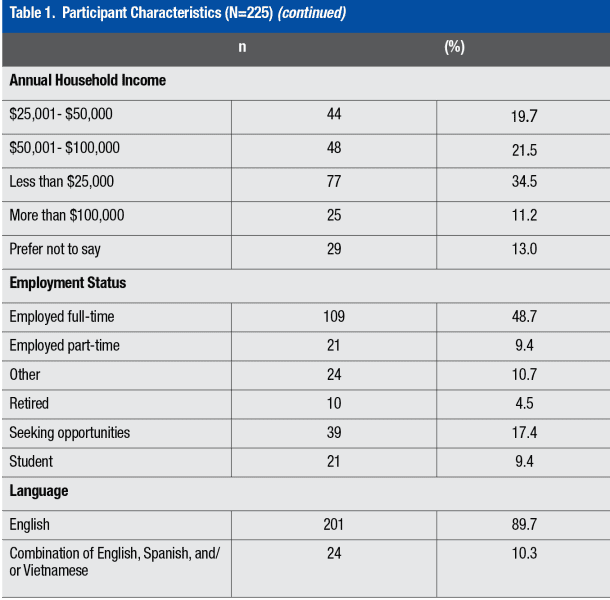

The most common age group was 23-30 years (36%). Most participants were male (n=139, 62%), and the most frequent level of education was a high school diploma/GED (n=84, 38%). The most frequent race reported was Caucasian (n=155, 70%). Most were not married (n=159, 71%) and were English-speaking (n=201, 90%) (Table 1).

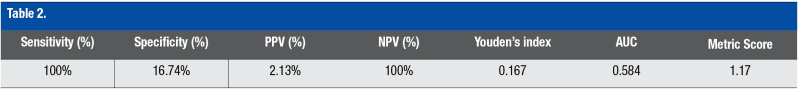

Among the 225 participants, frequencies of inadequate and adequate health literacy as measured by the three-question screener were 83.6% and 16.4%, respectively, compared to the STOFHLA at 2.2% and 97.8%, respectively (Table 2).

Comparison of the Three-Question Screener and STOFHLA

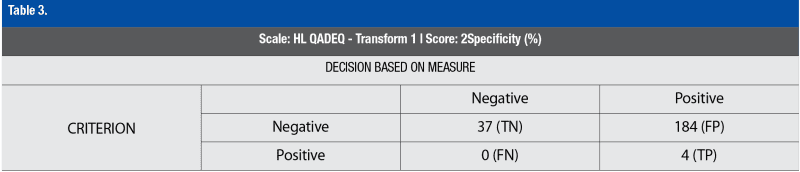

To determine the validity of the three-question screener compared to the STOFHLA at identifying those with inadequate health literacy, a receiver operating characteristic (ROC) curve was used. Compared to the STOFHLA, the three-question screener detected inadequate health literacy with 100% sensitivity and 16.74% specificity, with an area under the curve (AUC) of 0.58. A McNemar test of discordant pairs between the two health literacy tools was significant (χ²=184, p<0.001), as shown in Table 3.
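The relationship among these statistics can be sketched with a 2×2 table. The cell counts below are an assumption: they are not copied from Table 3 but were chosen because they are consistent with the reported sensitivity, specificity, AUC, and chi-square value. The sketch treats the STOFHLA as the reference standard, computes the AUC for a single binary cut point as the mean of sensitivity and specificity, and computes McNemar's statistic without a continuity correction.

```python
def diagnostic_summary(tp, fn, fp, tn):
    """Summarize a screener against a reference standard from a 2x2 table.

    tp: inadequate on both tools
    fn: adequate on screener, inadequate on reference
    fp: inadequate on screener, adequate on reference
    tn: adequate on both tools
    """
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    # With one cut point the ROC curve is two line segments, so the
    # trapezoidal area reduces to the mean of sensitivity and specificity.
    auc = (sensitivity + specificity) / 2
    # McNemar's test uses only the discordant cells (no continuity correction).
    mcnemar_chi2 = (fp - fn) ** 2 / (fp + fn)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "auc": auc,
        "mcnemar_chi2": mcnemar_chi2,
    }


# Hypothetical counts chosen to match the reported statistics (assumption).
summary = diagnostic_summary(tp=4, fn=0, fp=184, tn=37)
for name, value in summary.items():
    print(f"{name}: {value:.4f}")
```

Under these assumed counts, every discordant pair falls in one cell, which is why the chi-square statistic is large even though sensitivity is perfect.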

Discussion

Accurately identifying adequate or inadequate health literacy is an important part of improving health outcomes (Nehemiah & Reinke, 2020; O’Conor et al., 2020). However, the controversy regarding screening for health literacy in the clinical setting remains (Chew et al., 2004; Paasche-Orlow & Wolf, 2008; Weiss et al., 2005; Welch, VanGeest, & Caskey, 2011). The electronic distribution and assessment of health literacy for this population was successful. This is relevant for assessing health literacy in clinical and population-level settings. Additionally, the use of health literacy questions on an electronic medium is relevant as the use of technology across clinical settings increases. Of the 184 participants who reported having inadequate health literacy by the three-question screener, only 4 were identified as having inadequate health literacy by the STOFHLA. The specificity of the three-question screener was found to be only 16.74%. When the two tools were compared, the three-question screener did not classify any participant as having adequate health literacy whom the STOFHLA identified as inadequate; that is, there were no false negatives. The three-question screener therefore identified participants with inadequate health literacy but lacked specificity. Using the three-question screener in a clinic setting would capture patients with inadequate health literacy but would also likely label many patients with adequate health literacy as having inadequate health literacy. Our results therefore indicate a need for greater accuracy in estimating the rate of inadequate health literacy. While the three-question screener may provide a quick means of flagging low health literacy in a clinical setting, it may need to be used in combination with other tools and patient support, such as additional health education.

Limitations

While this study is novel in its approach to comparing a standardized health literacy assessment tool against the three-question screening tool, it has limitations. First, the population was primarily middle-aged Caucasian men from an urban area. However, there was representation from the African American community that matched the area’s demographics. Second, the first one hundred surveys were collected under the supervision of research assistants, while the remainder were completed remotely because of the convenience of using community sites to assist with data collection. This may have compromised the fidelity of the explanation of the purpose of this study. However, the electronic administration and the randomized order of the instruments support the reliability of the results. Additionally, as with all self-report data (Frawley, 1988), respondents could have over- or under-reported their skill level on the three-question screening tool.

It should also be noted that different health literacy assessment tools have been shown to measure different constructs (Morrison, Schapira, Hoffmann, & Brousseau, 2014). The three-question screening tool asks participants to self-assess their abilities to obtain advice from a health professional and understand oral and written health information. The STOFHLA is a series of knowledge questions scored to interpret the participant’s health literacy skills based on knowledge. Therefore, participants may be under- or overestimating their skills based on various personal factors and a sense of agency within the healthcare context.

Implications for Practice

This study was conducted in a community-based setting. As such, we could assess health literacy in several locations convenient for the population. We propose that the three-question screening tool may be accessible for use in various settings, not simply the clinical environment. Clinical health service providers are often unaware of their patients’ low health literacy. The brief health literacy screening questions previously used by the CDC provide enough information about the likelihood of low health literacy to be considered for use across multiple clinical care settings. One way to implement the questions is to use the three-question screening tool for the first assessment and then follow up with patients who have lower scores using an in-depth assessment such as the full TOFHLA or a similar, more robust tool. Additionally, providing further health information (for example, at the appropriate reading level) and strengthening communication strategies with patients who have lower health literacy skills continue to be important for all health providers to meet the growing needs of diverse patient populations.

References

Bass, P. F., 3rd, Wilson, J. F., & Griffith, C. H. (2003). A shortened instrument for literacy screening. J Gen Intern Med, 18(12), 1036-1038. doi:10.1111/j.1525-1497.2003.10651.x

Bass, P. F., 3rd, Wilson, J. F., Griffith, C. H., & Barnett, D. R. (2002). Residents’ ability to identify patients with poor literacy skills. Acad Med, 77(10), 1039-1041. doi:10.1097/00001888-200210000-00021

Bujang, M. A., & Adnan, T. H. (2016). Requirements for Minimum Sample Size for Sensitivity and Specificity Analysis. J Clin Diagn Res, 10(10), YE01-YE06. doi:10.7860/JCDR/2016/18129.8744

Chesser, A. K., Keene Woods, N., Wipperman, J., Wilson, R., & Dong, F. (2014). Health Literacy Assessment of the STOFHLA: Paper versus electronic administration continuation study. Health Educ Behav, 41(1), 19-24. doi:10.1177/1090198113477422

Chew, L. D., Bradley, K. A., & Boyko, E. J. (2004). Brief questions to identify patients with inadequate health literacy. Fam Med, 36(8), 588-594.

Chew, L. D., Griffin, J. M., Partin, M. R., Noorbaloochi, S., Grill, J. P., Snyder, A., . . . Vanryn, M. (2008). Validation of screening questions for limited health literacy in a large VA outpatient population. J Gen Intern Med, 23(5), 561-566. doi:10.1007/s11606-008-0520-5

Chima, C. C., Abdelaziz, A., Asuzu, C., & Beech, B. M. (2020). Impact of Health Literacy on Medication Engagement Among Adults With Diabetes in the United States: A Systematic Review. Diabetes Educ, 46(4), 335-349. doi:10.1177/0145721720932837

Frawley, P. J. (1988). The validity of self-report data. J Stud Alcohol, 49(5), 479-480. doi:10.15288/jsa.1988.49.479

Johnson, K., & Weiss, B. D. (2008). How long does it take to assess literacy skills in clinical practice? J Am Board Fam Med, 21(3), 211-214. doi:10.3122/jabfm.2008.03.070217

Keene Woods, N., & Chesser, A. K. (2017). Validation of a Single Question Health Literacy Screening Tool for Older Adults. Gerontol Geriatr Med, 3, 2333721417713095. doi:10.1177/2333721417713095

Kelly, P. A., & Haidet, P. (2007). Physician overestimation of patient literacy: a potential source of health care disparities. Patient Educ Couns, 66(1), 119-122. doi:10.1016/j.pec.2006.10.007

Morrison, A. K., Schapira, M. M., Hoffmann, R. G., & Brousseau, D. C. (2014). Measuring health literacy in caregivers of children: a comparison of the newest vital sign and S-TOFHLA. Clin Pediatr (Phila), 53(13), 1264-1270. doi:10.1177/0009922814541674

Nehemiah, A., & Reinke, C. E. (2020). Simply speaking: The importance of health literacy for patient outcomes. Am J Surg. doi:10.1016/j.amjsurg.2020.08.005

O’Conor, R., Moore, A., & Wolf, M. S. (2020). Health Literacy and Its Impact on Health and Healthcare Outcomes. Stud Health Technol Inform, 269, 3-21. doi:10.3233/SHTI200019

Paasche-Orlow, M. K., & Wolf, M. S. (2008). Evidence does not support clinical screening of literacy. J Gen Intern Med, 23(1), 100-102. doi:10.1007/s11606-007-0447-2

Palumbo, R. (2017). Examining the impacts of health literacy on healthcare costs. An evidence synthesis. Health Serv Manage Res, 30(4), 197-212. doi:10.1177/0951484817733366

Powell, C. K., & Kripalani, S. (2005). Brief report: Resident recognition of low literacy as a risk factor in hospital readmission. J Gen Intern Med, 20(11), 1042-1044. doi:10.1111/j.1525-1497.2005.0220.x

Rahman, F. I., Aziz, F., Huque, S., & Ether, S. A. (2020). Medication understanding and health literacy among patients with multiple chronic conditions: A study conducted in Bangladesh. J Public Health Res, 9(1), 1792. doi:10.4081/jphr.2020.1792

Ratzan, S. C., Parker, R. M., Selden, C., & Zorn, M. (2000). National Library of Medicine current bibliographies in medicine: health literacy. Bethesda, MD: National Institutes of Health, US Department of Health and Human Services, 331-337.

Ratzan, S. C., & Parker, R. M. (2000). Introduction. In C. R. Selden, M. Zorn, S. C. Ratzan, et al. (Eds.), National Library of Medicine current bibliographies in medicine: health literacy (p. 4). Bethesda, MD: National Institutes of Health, U.S. Department of Health and Human Services.

Rubin, D. a. N., S. (2019). Report on 2016 BRFSS Health Literacy Data. Retrieved from https://www.cdc.gov/healthliteracy/pdf/Report-on-2016-BRFSS-Health-Literacy-Data-For-Web.pdf

Seidling, H. M., Mahler, C., Strauss, B., Weis, A., Stutzle, M., Krisam, J., . . . Haefeli, W. E. (2020). An Electronic Medication Module to Improve Health Literacy in Patients With Type 2 Diabetes Mellitus: Pilot Randomized Controlled Trial. JMIR Form Res, 4(4), e13746. doi:10.2196/13746

Song, M. S., & Park, S. (2020). Comparing two health literacy measurements used for assessing older adults’ medication adherence. J Clin Nurs. doi:10.1111/jocn.15468

Weiss, B. D., Mays, M. Z., Martz, W., Castro, K. M., DeWalt, D. A., Pignone, M. P., . . . Hale, F. A. (2005). Quick assessment of literacy in primary care: the newest vital sign. Ann Fam Med, 3(6), 514-522. doi:10.1370/afm.405

Welch, V. L., VanGeest, J. B., & Caskey, R. (2011). Time, costs, and clinical utilization of screening for health literacy: a case study using the Newest Vital Sign (NVS) instrument. J Am Board Fam Med, 24(3), 281-289. doi:10.3122/jabfm.2011.03.100212